JustDone AI Detector Review 2026: Is It Accurate? Shakespeare Flagged 74% AI (Plus JustDone vs Turnitin)

Is JustDone AI Detector accurate? I fed it Shakespeare — it flagged 74% AI while GPTZero said 100% human. Full 2026 review: accuracy test, false-positive rate, JustDone vs Turnitin, and whether you should trust the score before submitting.

If you've been searching "JustDone AI detector" or wondering whether you can trust JustDone's detection score — here's a quick reality check.

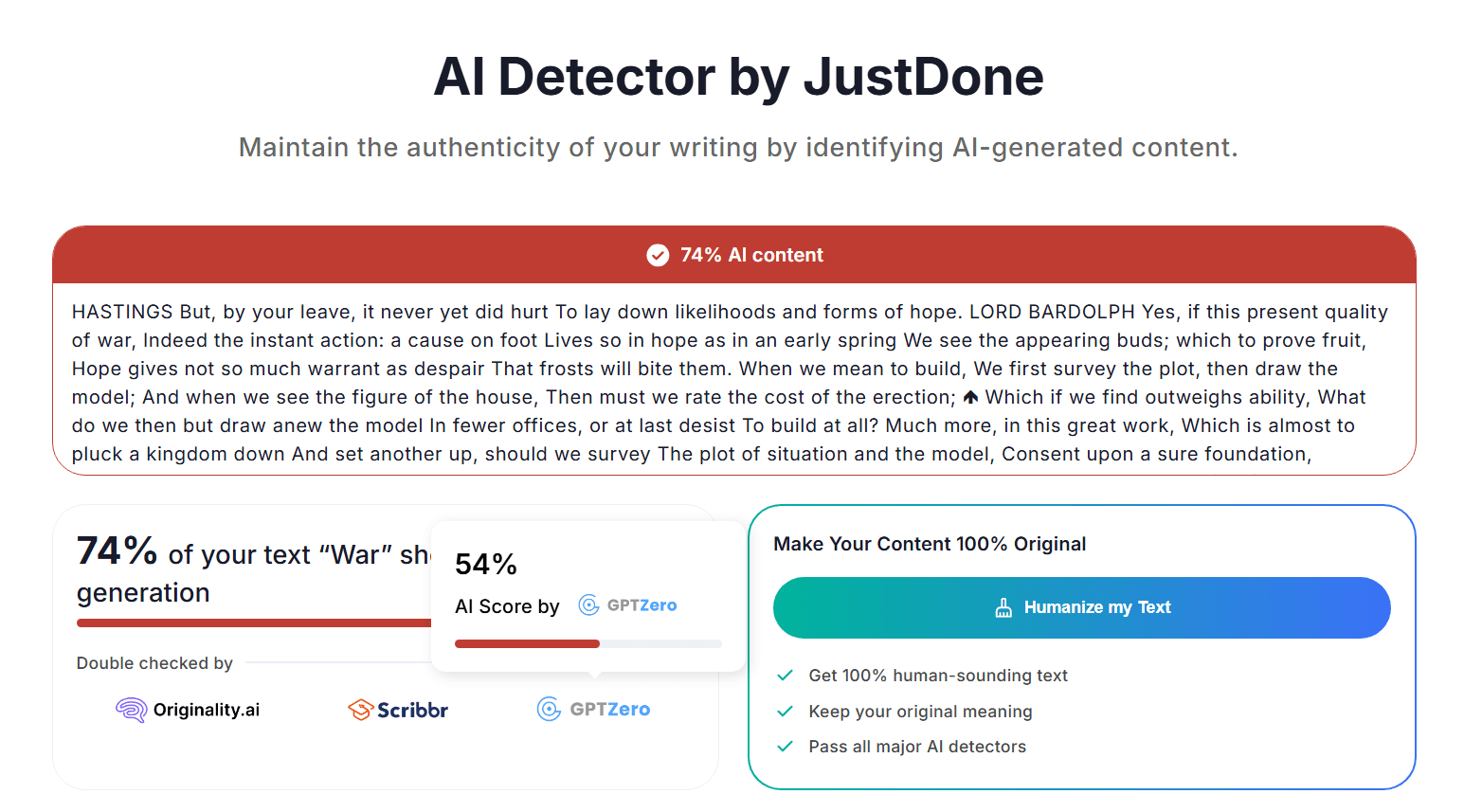

I took a Shakespeare excerpt (public domain, undeniably human-written, centuries older than anything an AI could have produced) and pasted it into JustDone's AI Detector. The result:

- JustDone flagged it as 74% "AI content" (screenshot below)

- The page immediately pushed a "Humanize my Text" button with promises like "pass all major AI detectors"

- It also claimed the result was "double checked" by other services

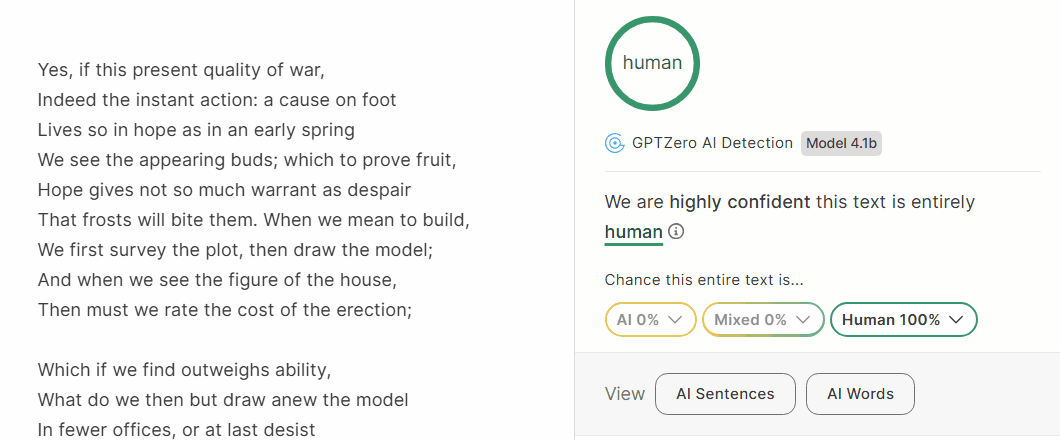

Out of curiosity, I ran the exact same excerpt through GPTZero directly. GPTZero's verdict: Human 100%.

So at a bare minimum, JustDone's score on this test is a false positive, and the "other detectors agree" messaging doesn't hold up the moment you actually cross-check.

Note: AI detectors change over time. This post isn't about proving any platform is "always" wrong. It's about a simple, reproducible sanity check and the incentive problem it reveals.

Is JustDone AI Detector Accurate? The Short Answer

No, at least not reliably enough to base academic submission decisions on. In our test, JustDone labelled a Shakespeare excerpt as 74% AI, even though the text predates every modern language model by roughly four centuries. On the same day, GPTZero scored the same text as 100% human. If a detector gets Shakespeare wrong while claiming other platforms "double checked" the result, its verdict on your essay is, at best, a weak signal.

Is JustDone good for anything? As a quick "does this paragraph sound too generic" second opinion, sure — any detector can prompt you to look at a specific passage. But a JustDone percentage is not a pass/fail number, and it does not tell you what your university's Turnitin report will look like. If your university uses Turnitin, that is the benchmark that actually decides your grade. See our AI detector comparison for the full breakdown.

Why the "Shakespeare Test" works as a sanity check

AI detection is probabilistic. These tools aren't proving who wrote something; they're estimating how closely a text matches patterns that are common in AI output.

So the best way to sanity-check a detector isn't to use text that "feels human." It's to use text that modern AI cannot have generated, because it was published before these tools existed: Shakespeare via Project Gutenberg, academic papers from before 2019, older published literature. Anything that predates GPT and large language models qualifies.

A serious detector should confidently classify this kind of text as human-written. If it doesn't — if it flags Shakespeare as 74% AI — that's not a minor technical glitch. That's a loud signal that something is fundamentally off.
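If you want to run this check on more than one excerpt, the arithmetic is simple enough to script. The sketch below is only an illustration: it assumes you copy each detector score in by hand (it does not call any detector), and the `Sample` class, the 50% threshold, and the 2019 cutoff year are assumptions made for the example, not anything JustDone or GPTZero publishes.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    title: str
    year_written: int   # an approximate year is fine; it only needs to predate LLMs
    ai_score: float     # the detector's "AI" percentage (0-100), typed in by hand


def false_positive_rate(samples, threshold=50.0, cutoff_year=2019):
    """Share of known-human samples that the detector scored at or above threshold."""
    # Only texts written before the cutoff count as "known human".
    known_human = [s for s in samples if s.year_written < cutoff_year]
    if not known_human:
        raise ValueError("need at least one sample written before the cutoff year")
    flagged = [s for s in known_human if s.ai_score >= threshold]
    return len(flagged) / len(known_human)


# Scores from this post's test; 1600 is a rough placeholder year for the excerpt.
justdone = [Sample("Shakespeare excerpt", 1600, 74.0)]
gptzero = [Sample("Shakespeare excerpt", 1600, 0.0)]  # "Human 100%" read as 0% AI

print(false_positive_rate(justdone))  # 1.0
print(false_positive_rate(gptzero))   # 0.0
```

One excerpt is a sanity check, not a benchmark. Adding a handful of other pre-2019 texts gives a less noisy picture of how often a tool flags writing that cannot be AI-generated.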

What JustDone showed: 74% AI and a "Humanize" upsell

Screenshot: JustDone labels the Shakespeare excerpt as 74% "AI content" and promotes "Humanize my Text".


Even before you dig into the algorithm, the page design tells its own story. You get a big red score (designed to create anxiety), then a promise of an instant fix ("keep meaning", "pass major detectors"), and the primary action on the page is the "Humanize" button.

That's not just a technical issue; it's a business model issue. When detection output is tightly coupled to selling a rewriting product, keeping false positives low stops being the priority. In fact, false positives are good for business: more alarming scores mean more clicks on "Humanize."

"Other detectors agree"? Let's check.

JustDone's interface implies the result was "double checked" by other services. That sounds reassuring. But here's what happened when I tested the same excerpt directly in GPTZero:

Screenshot: GPTZero (Model 4.1b) labels the same text as Human 100%.


| Platform | Result on the same Shakespeare excerpt | What it means |
|---|---|---|
| JustDone | 74% AI content | Clear false positive |
| GPTZero | Human 100% | JustDone's score isn't consensus |

I want to be fair here: GPTZero isn't always right either. No detector is. But the point is that if JustDone suggests other services agree, and your own cross-check shows they don't, the trust disappears pretty quickly.

Why this matters for students

When AI detection scores are this unreliable, students get pushed into two bad places:

False reassurance — you get a low score on some detector and treat it as a green light, even though your university uses a completely different tool.

False panic — you get a high score and either spiral into anxiety or start making risky decisions like running your essay through "humanizer" tools, which can actually create new problems.

In reality, universities don't judge your work based on a random website's score. They care about policy compliance, academic integrity, whether your writing process is explainable (do you have outlines, drafts, sources, citations, version history?), and whether you used prohibited rewriting services.


The red-score-then-paid-fix pattern

Every detector can make mistakes — Turnitin included. That's normal. But there's a difference between "occasionally wrong" and "wrong in a way that drives revenue."

Be cautious whenever you see a platform that combines: obvious false positives on text that is clearly human-written, messaging that implies external validation, and an immediate upsell to a paid "humanizer" or rewriter.

That pattern — create anxiety, then sell relief — is worth recognising, because it shows up across quite a few consumer AI detection sites, not just JustDone.

What to do instead

If your goal is reducing submission risk without getting into dodgy territory, here's a more grounded approach:

Match the system your university uses. If it's Turnitin, align to that. Don't spend energy chasing scores on tools your institution doesn't use. See Turnitin's official site.

Keep a defensible writing trail. Outline, drafts, notes, sources, citations, version history (Google Docs or Word Track Changes). If you're ever asked "how did you write this?", being able to show your process is worth more than any detector score.

Cross-check if you want, but don't worship scores. If multiple tools flag the same paragraphs, that's useful — it tells you those sections might sound too generic. Revise them by adding specificity: course concepts, evidence, examples, and your own reasoning.

Avoid "humanizer" rewriting. It can raise academic integrity risk and often doesn't even reduce detector scores consistently. It's a lose-lose.
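The cross-check step above ("if multiple tools flag the same paragraphs") can be made concrete with a few lines of code. This is a rough sketch under stated assumptions: you have already collected a per-paragraph score from each tool by hand, the tool names are placeholders, and the 50% threshold and two-tool minimum are arbitrary choices for illustration rather than values any detector recommends.

```python
def consensus_flags(scores_by_tool, threshold=50.0, min_tools=2):
    """Return indexes of paragraphs that at least min_tools tools scored >= threshold.

    scores_by_tool maps a tool name to a list of per-paragraph "AI" percentages.
    """
    lengths = {len(scores) for scores in scores_by_tool.values()}
    if len(lengths) != 1:
        raise ValueError("every tool needs a score for the same set of paragraphs")
    paragraph_count = lengths.pop()

    flagged = []
    for i in range(paragraph_count):
        # Count how many tools consider paragraph i suspicious.
        votes = sum(1 for scores in scores_by_tool.values() if scores[i] >= threshold)
        if votes >= min_tools:
            flagged.append(i)
    return flagged


# Hypothetical scores for a three-paragraph draft, entered by hand.
example_scores = {
    "tool_a": [80.0, 10.0, 60.0],
    "tool_b": [70.0, 20.0, 30.0],
}

print(consensus_flags(example_scores))  # [0]
```

Paragraphs that come back flagged are candidates for adding specificity, not proof of anything. A paragraph flagged by only one tool, like the third one here, is exactly the kind of disagreement this post describes.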

If you want a pre-submission check that's closer to what your university actually uses, we can provide a Turnitin AI detection report for your draft.

Bottom line

The Shakespeare test is simple, and the result speaks for itself. JustDone produced a glaring false positive on text that is centuries old and undeniably human-written, while steering users toward a paid "Humanize" product. For students who are trying to make decisions about their academic submissions, that's not a tool you can rely on.

Treat any AI detector score as a signal, not a verdict. Your best protection isn't a clean score on a website — it's aligning to your university's system and keeping a writing process you can explain if anyone asks.

FAQ

JustDone vs Grammarly for academic writing: which should you trust?

They do completely different things. Grammarly is a writing assistant — it helps with clarity, tone, and grammar. JustDone positions itself as an AI detector and "humanizer" funnel. For actually improving your academic writing, Grammarly (plus proper citations and a defensible writing trail) is more useful. A JustDone percentage score — especially one that flags Shakespeare as AI — shouldn't influence your submission decisions.

GPTZero vs Grammarly: are they interchangeable?

Not at all. GPTZero is an AI detection tool. Grammarly is a writing quality tool. They solve different problems. A sensible student workflow uses Grammarly to improve readability, cross-checks with a detector or two for signals (not guarantees), and keeps drafts and version history so the writing process is explainable.

GPTZero vs JustDone: why do they give opposite results?

In our test, JustDone labelled Shakespeare as 74% AI and displayed "Double checked" messaging implying other tools agreed. GPTZero labelled the same text as 100% human. So it's not just that different models produce different scores — it's that JustDone used cross-check language to make a false positive seem validated, and then pushed a paid "Humanize" feature as the solution.

Different detectors will always disagree to some extent (different models, different thresholds). But implying third-party agreement when the cross-check doesn't match is a credibility issue, not just a technical one.

Turnitin vs JustDone: which one matters for students?

If your university uses Turnitin, Turnitin is your benchmark. Not a free consumer website. Turnitin isn't perfect either, but it's built for institutional workflows and aims to keep false positives low. For a detailed breakdown, see our guide on Best AI Detector for Students.

Need a Turnitin AI Report Before You Submit?

Consumer AI detector scores often conflict and can include obvious false positives. If your university uses Turnitin, Purply can provide a real Turnitin AI detection report before submission—so you can revise with less uncertainty and avoid last-minute surprises.

If you're struggling with:

- Getting contradictory AI detector scores across platforms

- Worrying about false positives and academic integrity flags

- Needing a pre-check that matches institutional reality

- Not being able to access Turnitin directly, even though your school uses it

Here's how we'd coach you through it:

Send us your draft or your question. We can provide:

- Turnitin AI detection report (institution-style)

- Pre-submission risk notes and revision direction

- Writing improvement guidance focused on clarity and evidence

- Rush support for tight deadlines (on request)